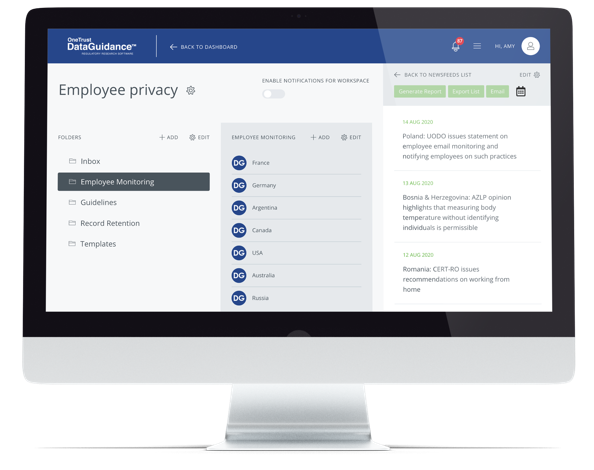

UK: Government issues response to Communications and Digital Committee report on LLMs and generative AI

On May 2, 2024, the House of Lords' Communications and Digital Committee (the Committee) published the Government's response to the Committee's report on large language models (LLMs) and generative artificial intelligence (AI). The response largely agrees with the report's recommendations for balancing the opportunities and risks of AI.

Risks of AI

In its response, the Government states that the risks and uncertainties associated with AI require responsible development and deployment. In this regard, the response outlines safety measures that have already been implemented, including the establishment of the Central AI Risk Function (CAIRF) and the AI Safety Institute (AISI), both aimed at mitigating potential negative impacts on society.

Fair competition

Furthermore, the Government's response addresses the need for transparency and fair competition, echoing the Committee's concerns about market power concentration and the risks of regulatory capture. The response emphasizes maintaining open competition and safeguarding public and societal interests in the digital economy.

Regulatory strategy

On regulatory strategy, the response argues for a balanced approach that focuses not only on AI safety but also on encouraging commercial opportunities. The response notes that this approach involves a principles-based regulatory framework tailored to the risks and opportunities presented by AI in different sectors.

IP and AI

The response addresses the relationship between AI and copyright law, acknowledging the challenges in adapting existing intellectual property (IP) laws to AI-generated content. The response supports a balanced approach that protects creators' rights while facilitating AI-driven innovation in the creative sectors. The response also notes that transparency in how AI models are trained and how outputs are attributed is crucial for maintaining trust and accountability in AI development.